MySQL Shell is the popular tool to work with MySQL and it integrates perfectly everything for MySQL Database Service (MDS) in Oracle Cloud Infrastructure (OCI).

For any logical dump and load of data and especially to dump data to MDS, MySQL Shell Utility is the recommended solution.

MySQL Shell Dump & Load is faster, is parallel and compatible with OCI (block storage, MDS grants, automatic primary key creations, ...)

This post is about compression.

By default, MySQL Shell Dump uses zstd compression.

Zstd, short for Zstandard, is a fast lossless compression algorithm, targeting real-time compression scenarios at zlib-level compression ratio.

It's possible to specify the compression algorithm to use for MySQL Shell Dump Utility with the compression option.

The accepted values are:

- none

- zstd (default)

- gzip

If you plan to use compression and multi-threads (parallel), it's recommended to have a powerful instance for MySQL Shell.

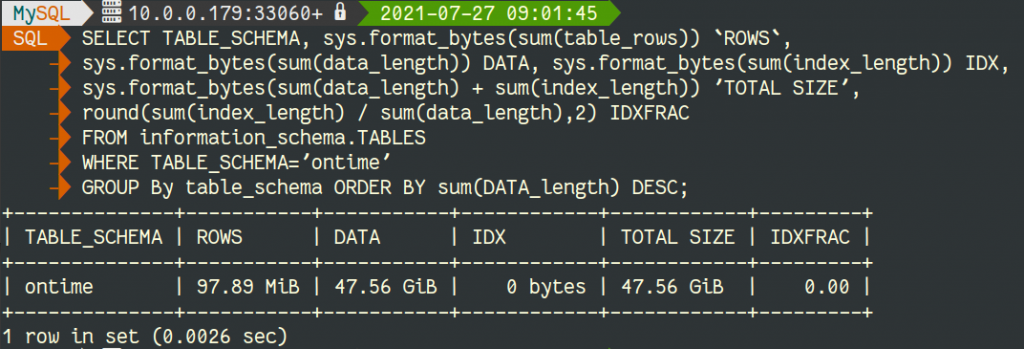

To compare the compression algorithms and the old mysqldump, I use a server with 8 cores (Intel(R) Xeon(R) Platinum 8167M CPU @ 2.00GHz - VM.Standard2.4 on OCI). The data is one single table of 47GB InnoDB Data !

Dumping Data

The default parallelism of MySQL Shell is to use 4 threads.

If you have large dataset to dump & load, I recommend to have a machine with more cores and specify more threads with the threads option like:

JS util.dumpSchemas(["ontime"], "/home/opc/dump_zstd",{"threads": 32})MySQL Shell with Zstd

Let's start with MySQL Shell Dump and zstd (the default):

JS util.dumpSchemas(["ontime"], "/home/opc/dump_zstd",{"compression": "zstd"})

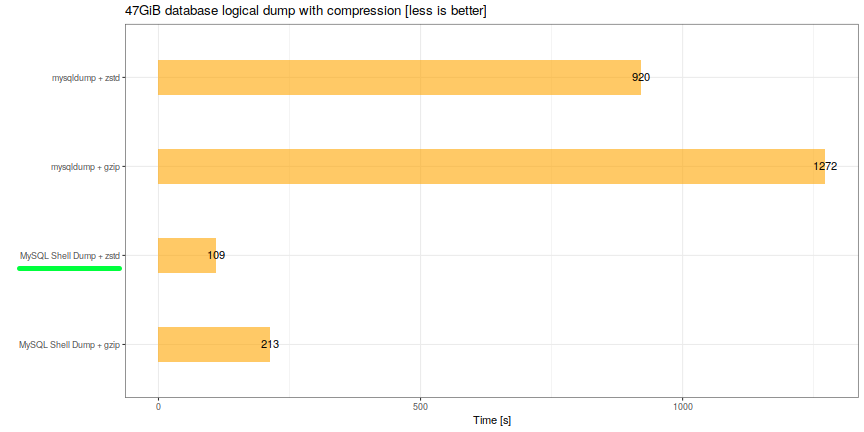

<strong>Duration: 00:01:49s</strong>

Schemas dumped: 1

Tables dumped: 1

Uncompressed data size: 34.64 GB

Compressed data size: 3.03 GB

Compression ratio: 11.4

Rows written: 105336666

Bytes written: 3.03 GB

Average uncompressed throughput: 317.36 MB/s

Average compressed throughput: 27.80 MB/sMySQL Shell with gzip

Now, we try the same with gzip as compression method:

JS util.dumpSchemas(["ontime"], "/home/opc/dump_gzip",{"compression": "gzip"})

<strong>Duration: 00:03:33s</strong>

Schemas dumped: 1

Tables dumped: 1

Uncompressed data size: 34.64 GB

Compressed data size: 4.22 GB

Compression ratio: 8.2

Rows written: 105336666

Bytes written: 4.22 GB

Average uncompressed throughput: 162.36 MB/s

Average compressed throughput: 19.76 MB/smysqldump

As I already claimed, mysqldump (and mysqlpump), should not be used anymore, but just for info, mysqldump+gzip took 21m12sec and mysqldump+zstd took 15m20sec.

Results

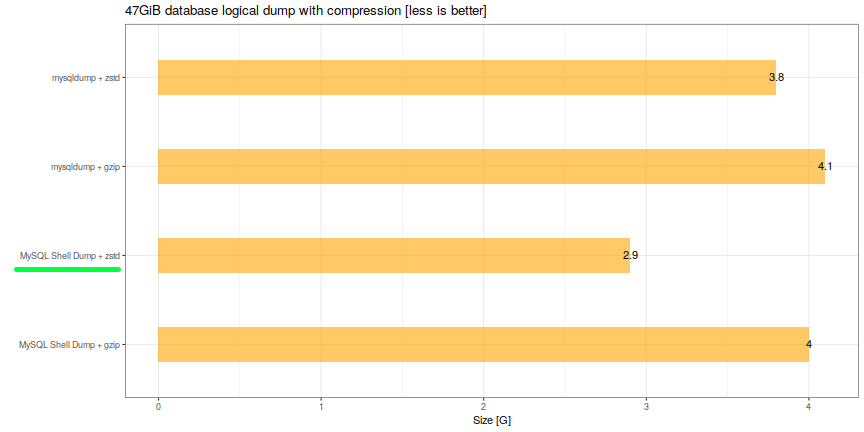

If we check the size of the dump and the speed, it's obvious that MySQL Shell with Zstd (compression ratio of 11.1) is the best option:

[root@mysql-shell opc]# du -sh *

4.0G dump_gzip

<strong>2.9G dump_zstd</strong>

4.1G mysqldump_gzip.sql.gz

3.8G mysqldump_zstd.sql.zst

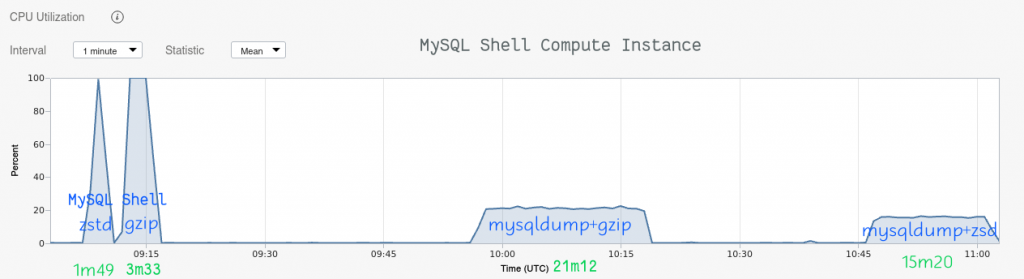

As I wrote earlier, the power of the MySQL Shell machine matters. We can compare the CPU usage:

MySQL Shell uses all the power of the instance but this is of course not a problem on a dedicated instance for such operation.

Conclusion

By default MySQL Shell Dump & Load utility provides a very good compression algorithm that can save you a lot of disk space. Additionally, with it's parallelism, the Load & Dump is much faster than the traditional mysqldump. MySQL Shell can dump AND load in parallel even in a single table !

As you could notice, we started with 47GB and finished with 3GB of data, which is a compression of 93% !

If you are looking for a logical dump solution, MySQL Shell is the best option.

Thank you for using MySQL and MySQL Shell.